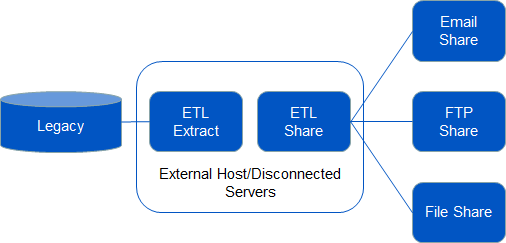

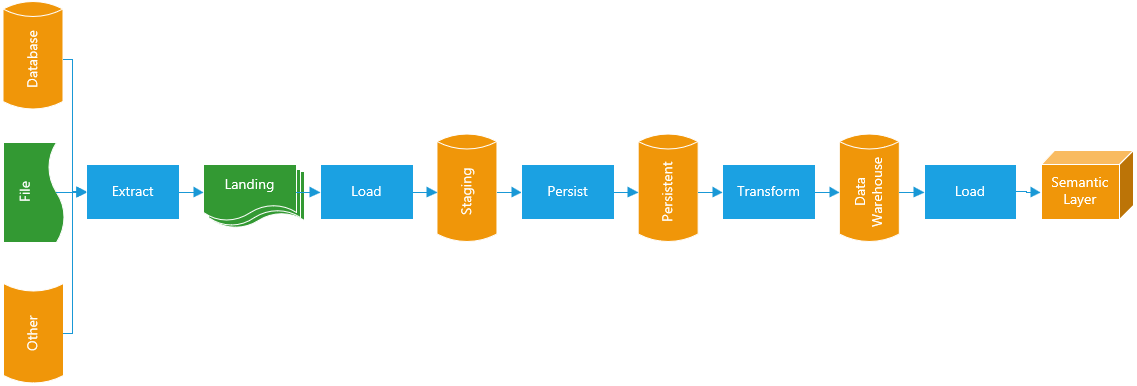

These scenarios require engineers with specialized expertise in those systems to develop ways for unlocking the data. Legacy systems, in particular, may not offer easily accessible methods to extract data. Even when these databases use a commonly-used query language, such as SQL, their proprietary implementations means pipelines must use different queries to accomplish the same task with other sources. Relational database management systems will have internal query processes that generate data sets for export. Modern systems will have APIs that allow external systems to request data programmatically. Each source has its own way of exporting data. Extraction is rarely as simple as it sounds. In this phase, the pipeline will pull data from the discovered sources and copy it into the staging area. These models will define the data processing steps needed to make the source data conform to the data warehouse’s requirements.Īt the same time, the engineer will request the creation of a staging area, a data store separate from sources or destinations where transformation can proceed without impacting other systems.

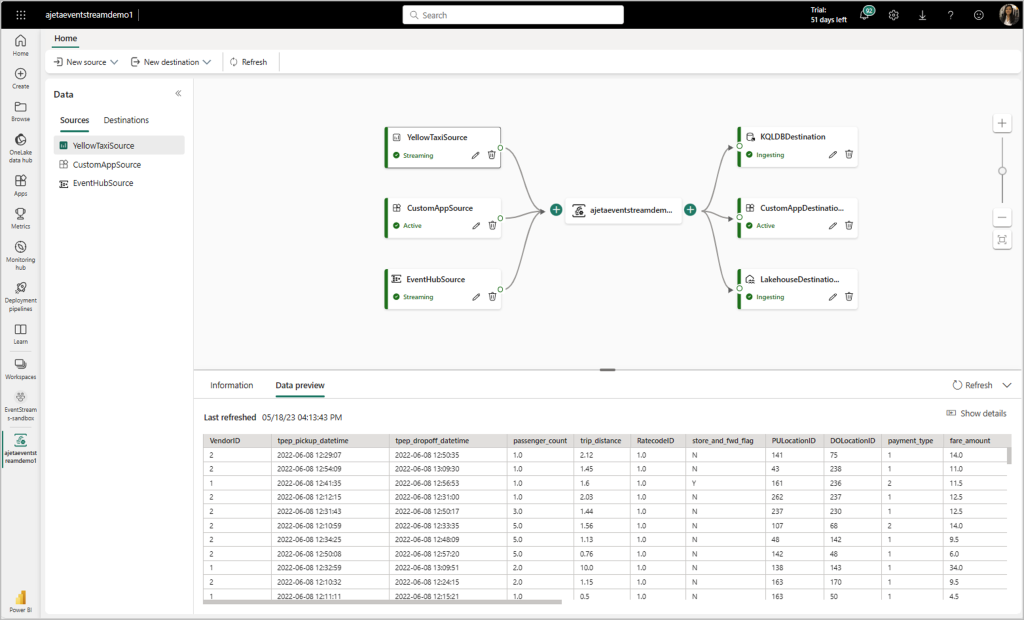

The engineer will create data models describing the source data’s schema, data types, formats, and other characteristics. With clear direction, an engineer will explore the company’s on-premises and cloud infrastructure for the most relevant source systems and data sets. The data management team will lead the resulting project, collecting input from stakeholders to define the project’s goals, objectives, and metrics. That need may be acquiring of a new data source, changes to an existing source, or decision-makers’ demands for insights into business questions. A business need triggers pipeline development. Traditional ETL processes are multi-stage operations that ensure data entering a repository consistently meets governance and quality standards. This is why data engineers build ELT pipelines to fill their data lakes. Since lakes store raw data, data pipelines did not need to transform data upon ingestion, leaving the data processing to each use case. In addition, lakes can store more diverse data types in their raw formats. Able to leverage commodity, cloud-based data storage, lakes are more scalable and affordable. Data warehouses lacked the flexibility and scalability to handle the ever-growing volumes, velocities, and complexities of enterprise data. Over time, a rich ecosystem of ETL tools arose to streamline and automate data warehouse ingestion.Īs the era of big data emerged, this architecture began to show its weaknesses. Data engineers had to pull information from the company’s diverse sources and impose consistency to make the data warehouse useful. Data became less accessible, requiring considerable time, effort, and expense to generate valuable insights about the business.ĭata warehousing attempted to resolve this situation by creating a central repository from which data analysts could easily discover and use data to support decision-makers.

Moreover, inconsistent governance allowed domains to build their data systems to varying quality standards, schema, format, and other criteria. Each database management system formatted data differently, used different querying methods, and did not share data easily. This guide will compare and contrast these data integration methods, explain their use cases, and discuss how data virtualization can streamline pipeline development and management - or replace pipelines altogether.Īs enterprise data sources proliferated, information architectures fragmented into isolated data silos. Load: The loading process deposits data, whether processed or not, into its final data store, where it will be accessible to other systems or end users, depending on its purpose.Ĭommonly used to populate a central data repository like a data warehouse or a data lake, ETL and ELT pipelines have become essential tools for supporting data analytics. Additional data processing steps may cleanse, correct, or enrich the final data sets. Transform: Data transformation involves converting data from its source schema, format, and other parameters to match the standards set for the destination. Extract: Data extraction is acquiring data from different sources, whether Oracle relational databases, Salesforce’s customer relationship management (CRM) system, or a real-time Internet of Things (IoT) data stream.
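
To make the extract, transform, and load stages more concrete, here is a minimal Python sketch of a tiny ETL run. It is an illustration only, not a production design: a single in-memory SQLite database stands in for two separate sources, a staging area, and a warehouse, and every table name, column name, and date format (`crm_customers`, `legacy_cust`, `staging`, `warehouse_customers`, and so on) is a hypothetical chosen for the example.

```python
import sqlite3

# Hypothetical per-source extraction queries: both sources speak SQL,
# but each needs its own query text to return the same logical fields.
SOURCES = {
    "crm": "SELECT id, email, signup FROM crm_customers",
    "legacy": "SELECT cust_no, mail_addr, created FROM legacy_cust",
}

def extract(conn, source):
    """Extract: pull raw rows from one source using that source's query."""
    return conn.execute(SOURCES[source]).fetchall()

def stage(conn, source, rows):
    """Copy the raw rows, unmodified apart from a source tag, into staging."""
    conn.executemany(
        "INSERT INTO staging (source, raw_id, raw_email, raw_date) "
        "VALUES (?, ?, ?, ?)",
        [(source, str(r[0]), r[1], r[2]) for r in rows],
    )

def transform(row):
    """Transform: conform one staged row to the assumed warehouse standard."""
    source, raw_id, raw_email, raw_date = row
    # Assumption: the legacy source stores DD/MM/YYYY, the CRM stores ISO dates.
    if "/" in raw_date:
        day, month, year = raw_date.split("/")
        raw_date = f"{year}-{month}-{day}"
    return (f"{source}-{raw_id}", raw_email.strip().lower(), raw_date)

def run_pipeline(conn):
    """Run extract -> stage -> transform -> load for every configured source."""
    for source in SOURCES:
        stage(conn, source, extract(conn, source))
    staged = conn.execute(
        "SELECT source, raw_id, raw_email, raw_date FROM staging"
    ).fetchall()
    # Load: deposit the conformed rows into the destination table.
    conn.executemany(
        "INSERT INTO warehouse_customers VALUES (?, ?, ?)",
        [transform(r) for r in staged],
    )
    conn.commit()

def demo():
    """Build the toy sources, run the pipeline, and return the loaded rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE crm_customers (id INTEGER, email TEXT, signup TEXT);
        CREATE TABLE legacy_cust (cust_no TEXT, mail_addr TEXT, created TEXT);
        CREATE TABLE staging (source TEXT, raw_id TEXT, raw_email TEXT, raw_date TEXT);
        CREATE TABLE warehouse_customers (customer_key TEXT, email TEXT, signup_date TEXT);
        INSERT INTO crm_customers VALUES (1, ' Ada@Example.com ', '2023-01-15');
        INSERT INTO legacy_cust VALUES ('A7', 'BOB@EXAMPLE.COM', '03/02/2021');
    """)
    run_pipeline(conn)
    return conn.execute(
        "SELECT * FROM warehouse_customers ORDER BY customer_key"
    ).fetchall()
```

Calling `demo()` returns the two customers with a unified key, lower-cased email, and ISO-formatted date. An ELT variant of the same sketch would load the staged rows into the destination as-is and defer `transform` to whichever consumer reads them.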
